|

DISCLAIMER:

This is an experimental plugin. It is neither production-ready nor proven, and in its current stage it is not a viable, fact-based method.

This plugin WILL go through several iterations in the coming year.

The whole plugin is a big question mark and consists of a lot of made-up elements.

If you are looking for absolute HDRI values for rendering, other sources might be (or better said: ARE) more viable.

Remember, this is just a public experimental playground, and this tool definitely falls into that category.

If someone has better insight into how to calculate the values, please DO share it in the forum.

My main projects need more attention in the coming months, so I don't have time for extensive research for now.

OK, now that this is clear, on with the text.

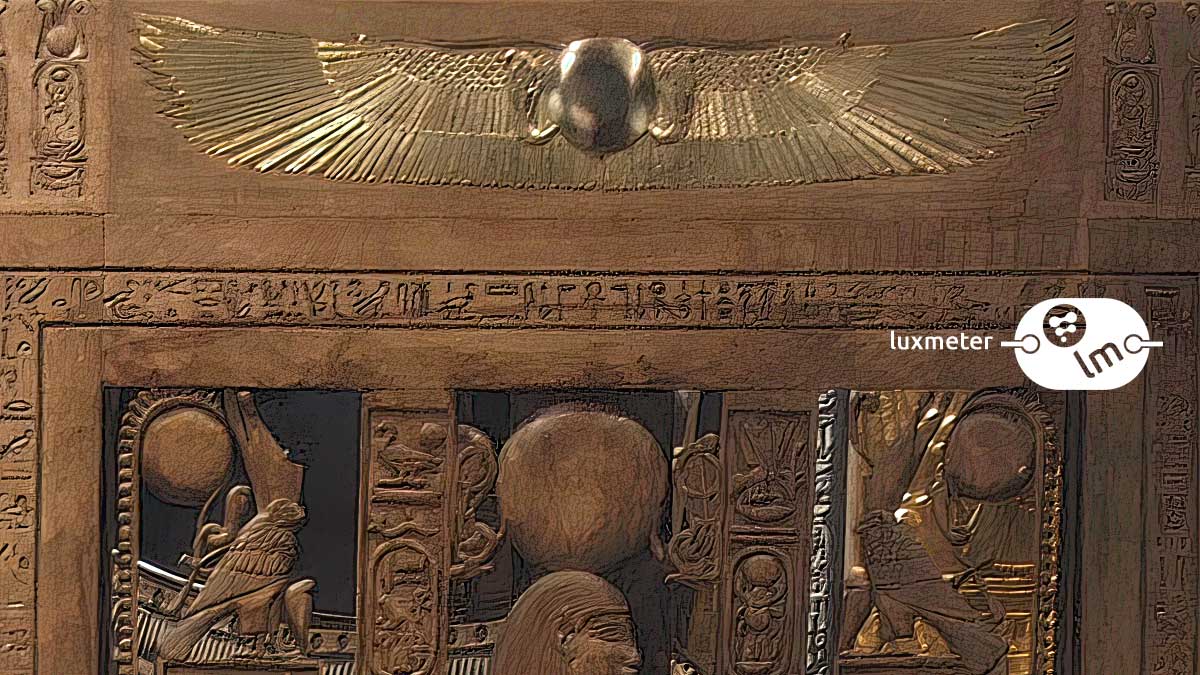

Reading this PDF and its correlation of the luxmeter readout with the upper hemisphere, I got inspired by the pseudo code in the document (page 66) to write a Fusion node plugin (basically a "see what happens" experiment).

Usage (in theory): you feed the HDRI into the input node and enter the luxmeter readout, which then gets correlated with the image, and the node outputs an HDRI with real-world values.

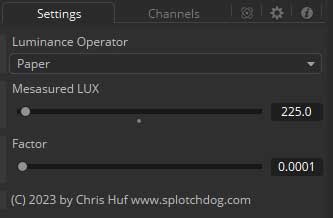

Luminance Operator

paper / mine

The method for calculating the luminance of the HDRI, using the upper half of a latitude-longitude projected HDRI.

"paper" is the method described in the PDF; "mine" is my modification of it, more on this later.

Measured LUX

The illuminance (in lux) measured by a luxmeter at the camera position, facing upwards.

Factor

A correction factor by which the result is multiplied. Use a value of 1.0 to get the results of the paper.

The problem: those values are too high for rendering programs by a factor of 10000.

Since the paper also states that the Photoshop method results in a white image, I think this is actually the output they want to achieve.

So for rendering purposes I added a correction factor, with a default of 0.0001, to the plugin interface to get values which somewhat resemble the output you would expect for rendering.

This is the first thing I made up which does not have any "scientific" background.

If you want the output of the paper, just enter 1.0 as the correction factor. Or find a factor which works better in your workflow.
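To make the role of the correction factor concrete, here is a minimal Python sketch. It is not the plugin's code: the function name `calibrate`, the `estimated` argument, and above all the idea that the scale is the ratio of the luxmeter readout to the estimate are assumptions for illustration, since the exact combination is not spelled out in this text.

```python
def calibrate(pixels, measured_lux, estimated, factor=0.0001):
    """Scale a grid of pixel values by measured_lux / estimated * factor.

    ASSUMPTION: the ratio measured_lux / estimated is a guess at how the
    readout is combined with the image estimate; only the multiplication
    by `factor` (default 0.0001, or 1.0 for the paper's output) comes
    from the text.
    """
    if estimated <= 0:
        raise ValueError("estimated value must be positive")
    scale = measured_lux / estimated * factor
    # Apply the same scale to every pixel value.
    return [[value * scale for value in row] for row in pixels]


# factor=1.0 keeps the unscaled ("paper") magnitude,
# the default 0.0001 reduces it by a factor of 10000.
paper_like = calibrate([[1.0, 2.0]], measured_lux=1000.0, estimated=2.0, factor=1.0)
render_like = calibrate([[1.0, 2.0]], measured_lux=1000.0, estimated=2.0)
```

With a factor of 1.0 the scale in this example is 500, and with the default it is 0.05, which is the 10000-fold difference described above.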

Another thing I'm a bit uncertain about is the division of the sum of the pixels by the complete width and height of the panorama:

Shouldn't it be half the height? I've kept the full height for the paper method, to provide people with the output of the paper.

To me this seems wrong... but hey, these people have more experience in that regard, so I'm going with their proposed code.
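The difference between the two normalisations can be shown with a small Python sketch. It assumes the image is already a grid of luminance values (a list of rows) with row 0 at the top, so the first half of the rows is the upper hemisphere. The function names are made up for this example and are not taken from the paper or the plugin.

```python
def upper_half_sum(lum):
    """Sum all luminance values in the upper half of the rows."""
    height = len(lum)
    return sum(sum(row) for row in lum[: height // 2])


def mean_full_height(lum):
    """Divide the upper-half sum by width * full height (as kept for "paper")."""
    height, width = len(lum), len(lum[0])
    return upper_half_sum(lum) / (width * height)


def mean_half_height(lum):
    """Divide the upper-half sum by width * half height (the alternative)."""
    height, width = len(lum), len(lum[0])
    return upper_half_sum(lum) / (width * (height // 2))


# Tiny 4x4 test grid: bright upper half, black lower half.
grid = [
    [8.0, 8.0, 8.0, 8.0],
    [8.0, 8.0, 8.0, 8.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
full = mean_full_height(grid)
half = mean_half_height(grid)
```

For an image with an even number of rows, the half-height version is exactly twice the full-height one, so the question above comes down to a constant factor of 2 in the result.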

The second thing I made up (or rather, am uncertain about) is the overall data accumulation. The pseudo code uses the average of the upper hemisphere for its calculation, while, as far as I understand, the luxmeter responds to the sum of all incoming rays. The above code will not preserve small highlights in a dark room, which still affect a luxmeter.

To simulate that, I made up my own method: I split the upper hemisphere of the HDRI into 100x50 areas and take the average of each area individually. Those values are then ADDED to the final output.

(It's the "mine" option in the method popup, while "paper" is the version from the PDF.)

I then correlated the output of the second method to match the first by multiplying the sum by 2000.

Basically, I made up a correction factor to keep the output consistent between both methods.
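The tile-based idea can be sketched in Python as follows. This is a simplified illustration, not the plugin's code: it assumes a grid of luminance values with row 0 at the top, reads "100x50 areas" as a grid of 100 columns by 50 rows of equal-size tiles, and ignores leftover pixels when the image size does not divide evenly. The name `tile_average_sum` is hypothetical.

```python
def tile_average_sum(lum, tiles_x=100, tiles_y=50):
    """Sum the per-tile averages over the upper half of a luminance grid.

    ASSUMPTION: tiles are equal in size; pixels that do not fit into the
    tile grid are ignored.
    """
    height, width = len(lum), len(lum[0])
    upper = lum[: height // 2]
    tile_w = width // tiles_x
    tile_h = len(upper) // tiles_y
    if tile_w == 0 or tile_h == 0:
        raise ValueError("image is too small for the requested tile grid")

    total = 0.0
    for ty in range(tiles_y):
        for tx in range(tiles_x):
            tile_sum = 0.0
            for y in range(ty * tile_h, (ty + 1) * tile_h):
                tile_sum += sum(upper[y][tx * tile_w:(tx + 1) * tile_w])
            # Average of this tile, added to the running total.
            total += tile_sum / (tile_w * tile_h)
    return total


# Tiny example: a 4x4 grid whose upper half is split into 2 tiles.
grid = [
    [1.0, 1.0, 3.0, 3.0],
    [1.0, 1.0, 3.0, 3.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
]
tile_total = tile_average_sum(grid, tiles_x=2, tiles_y=1)
```

The multiplication by 2000 mentioned above would be applied to this return value. One thing the sketch makes visible: when all tiles have the same size, the sum of the tile averages equals the number of tiles times the plain average of the upper half. Whether the plugin's actual tiling behaves differently is not verified here.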

So yeah, a lot of "made up" stuff in the code, and I'm not happy with the current output. Again, if you have any insights on this, write a comment in the forum or mail me.

This isn't a critique of the paper, by the way; they are probably more right than me.

But in general, I do think that bypassing the primary optical system and "calibrating" an HDRI based on the luxmeter readout for rendering has some usage scenarios. If this develops into a viable method, you could theoretically bypass complex camera calibrations and have an additional fallback if other methods do not work out. But this plugin is in its infancy, and if you have some information, please do not hesitate to share it to make this method more viable.

This will definitely get some updates in the future.

|